What OpenClaw Teaches Us About the Future of Building Software

Peter Steinberger's OpenClaw isn't just the hottest open-source project of 2026. It's a blueprint for how a single engineer can ship like a team, and why taste and architecture now matter more than raw coding skill.

There’s a phrase that’s been rattling around the tech world lately: “I ship code I don’t read.” It sounds provocative, maybe even reckless. But when you look at who said it and what they’ve built, it becomes one of the most important statements about where software engineering is heading.

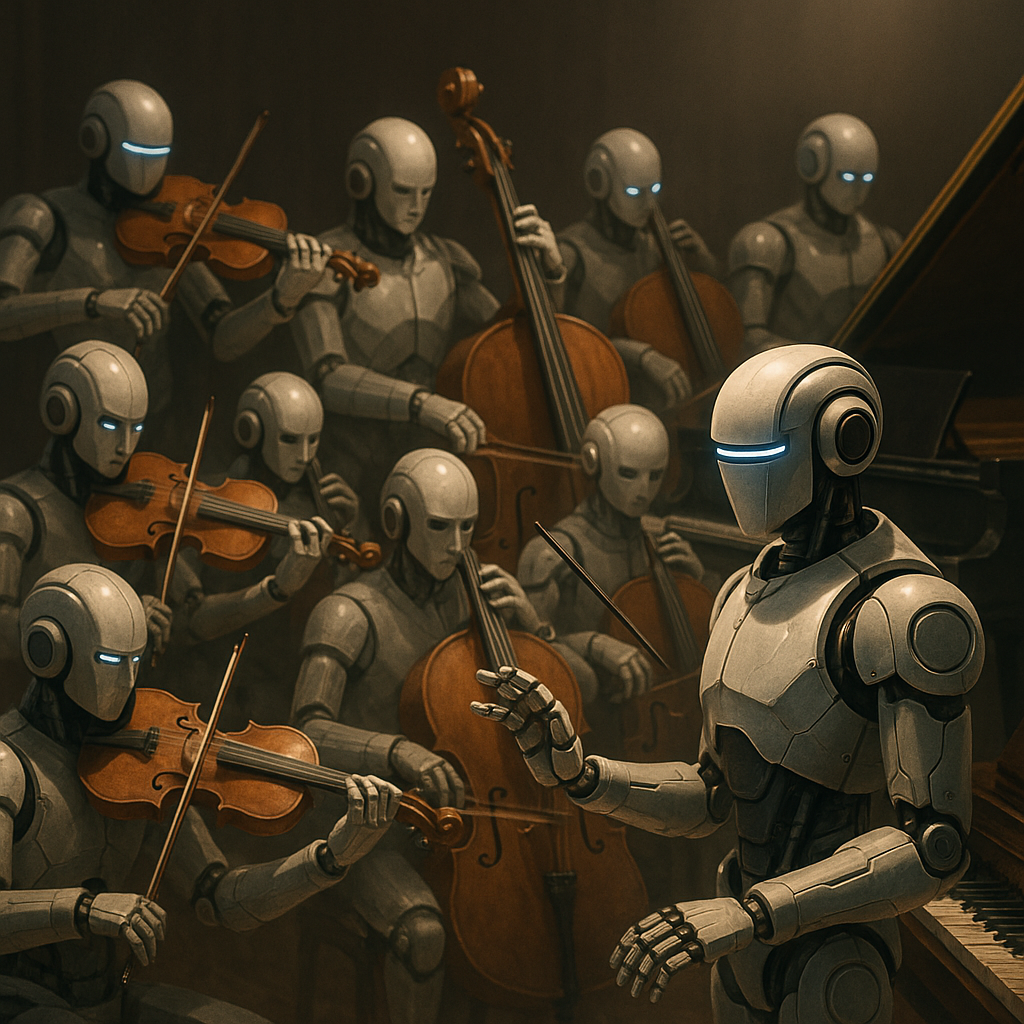

Peter Steinberger, the creator of OpenClaw, made over 6,600 commits in a single month. Alone. No team. No engineering org. Just one person orchestrating AI agents like a conductor leading an orchestra. The result? The fastest-growing open-source project in GitHub history, blowing past 200,000 stars in its first weeks and drawing more Google search interest than Claude Code and Codex combined.

This isn’t just a cool project. It’s a signal. And if you’re building software in 2026, you need to pay attention.

Who Is Peter Steinberger?

Before OpenClaw, Steinberger built PSPDFKit, a PDF rendering framework that ended up powering over a billion devices. He scaled that company to 70+ employees and achieved a $100M exit. The man already won the traditional software game.

Then he stepped away for three years.

When he came back, the world had changed. Large language models had matured. AI coding tools had gone from novelties to necessities. And Steinberger, instead of building another product company with a sales team and SaaS metrics, did something different. He built OpenClaw: a personal AI assistant that started as “Clawdbot” and became the most talked-about open-source project of the year.

What’s remarkable isn’t just what he built. It’s how he built it.

The “I Ship Code I Don’t Read” Philosophy

This statement sounds like chaos, but it’s deeply disciplined. Here’s what Steinberger means:

He doesn’t manually review every line of code. Instead, he orchestrates AI agents that generate, test, lint, compile, and validate their own output before it ever reaches the main branch. The agents close their own feedback loops. Think of it like managing a team, but the team members are AI agents running in parallel, each one handling a piece of the system.

His workflow looks something like this:

He runs 5 to 10 agents simultaneously, each working on different parts of the system. When something fails, the agent reads the error, fixes its own code, and retries. Humans set direction and quality standards. Agents handle the iterative grind.
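To make that loop concrete, here is a minimal Python sketch of a "retry until the checks pass" cycle. It is not Steinberger's tooling: `run_until_green`, `CheckResult`, `fake_agent`, and `unit_test` are hypothetical names invented for illustration, and the "agent" is a stub standing in for a real model call.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class CheckResult:
    ok: bool
    log: str = ""


# A check inspects candidate code and reports pass/fail plus an error log.
Check = Callable[[str], CheckResult]
# A generator receives the task and the previous error log (None on the first try).
Generate = Callable[[str, Optional[str]], str]


def run_until_green(
    task: str, generate: Generate, checks: List[Check], max_attempts: int = 3
) -> Optional[str]:
    """Return the first candidate that passes every check, or None if attempts run out."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(task, feedback)
        failures = [r.log for r in (check(candidate) for check in checks) if not r.ok]
        if not failures:
            return candidate
        # Feed the error output back into the next attempt.
        feedback = "\n".join(failures)
    return None


def fake_agent(task: str, feedback: Optional[str]) -> str:
    # Stand-in for a model call: the first draft is wrong, the retry is corrected.
    if feedback:
        return "def add(a, b): return a + b"
    return "def add(a, b): return a - b"


def unit_test(code: str) -> CheckResult:
    # Stand-in for a real test runner; only exec code you trust or have sandboxed.
    scope: dict = {}
    exec(code, scope)
    ok = scope["add"](2, 3) == 5
    return CheckResult(ok, "" if ok else "add(2, 3) should be 5")


result = run_until_green("write add()", fake_agent, [unit_test])
```

In this toy run the first draft fails the test, the error log is fed back, and the second draft passes. A real setup would swap in compile, lint, and test commands as the checks.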

The key insight? Managing a 70-person company at PSPDFKit prepared him for this. Running agents well requires the same skill as managing engineers: you have to release your need for perfectionism, trust the process, and focus on outcomes over implementation details.

Why OpenClaw Exploded

There’s a reason this project hit 100,000+ stars in its first week. OpenClaw isn’t just another AI wrapper. It represents a fundamentally different philosophy about what software tools should be.

The Anti-SaaS Model

Traditional SaaS companies measure success by retention. How long can you keep users inside your interface? How many features can you add to make them dependent?

Steinberger flipped this entirely. His philosophy: the goal of an AI tool should be to let the user exit the app as quickly as possible.

OpenClaw is local-first. Your data never leaves your device. There’s no cloud dependency, no subscription trap, no startup analyzing your files. It’s a tool built by an expert developer with zero interest in “capturing” users.

| Traditional SaaS | OpenClaw Model |
|---|---|
| Cloud-dependent | Local-first |
| Retention-focused | Exit-as-fast-as-possible |
| Data leaves your device | Data stays on your machine |
| Feature-as-a-Service | Gateway to capabilities |
| Vendor lock-in | Open source, swap anything |

Architecture: Not a Framework, a Gateway

OpenClaw isn’t a framework. It’s a gateway, a single runtime that sits between your AI model and the outside world. When you run the gateway, you start a single Node.js process. That’s it. No service mesh, no message broker, no distributed state.

Everything connects through this gateway: channel adapters for WhatsApp, Telegram, Discord, Slack, iMessage, Signal, and more. The CLI. The web UI. Mobile apps. Peripheral device nodes. One port, one process, everything unified.
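A rough way to picture the gateway idea is one object that every channel plugs into, with adapters translating each channel's payload into a shared message type. The Python sketch below is illustrative only: OpenClaw itself runs on Node.js, and `Gateway`, `Message`, the adapter signature, and the payload shapes are all made up here, not taken from the project or from any real messaging API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Message:
    channel: str
    user_id: str
    text: str


# An adapter converts a channel-specific payload into the shared Message type.
Adapter = Callable[[dict], Message]


class Gateway:
    """Single entry point: every channel goes through the same handler."""

    def __init__(self, handler: Callable[[Message], str]) -> None:
        self._adapters: Dict[str, Adapter] = {}
        self._handler = handler

    def register(self, channel: str, adapter: Adapter) -> None:
        self._adapters[channel] = adapter

    def receive(self, channel: str, payload: dict) -> str:
        adapter = self._adapters.get(channel)
        if adapter is None:
            raise KeyError(f"no adapter registered for channel {channel!r}")
        return self._handler(adapter(payload))


# Hypothetical payload shapes, chosen only to show two channels differing.
gateway = Gateway(handler=lambda m: f"[{m.channel}] {m.user_id}: {m.text}")
gateway.register("chat", lambda p: Message("chat", p["sender"], p["body"]))
gateway.register("cli", lambda p: Message("cli", "local", p["line"]))

reply = gateway.receive("chat", {"sender": "42", "body": "hello"})
```

The point of the design is that the handler never needs to know which channel a message came from. Adding a channel means adding an adapter, not another service.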

The Lane Queue: Solving Agent Chaos

One of the most clever architectural decisions is the Lane Queue system. Every session gets its own serial queue, keyed by workspace:channel:userId. This prevents race conditions and concurrent writes to shared state, an entire class of bugs that plague most agent systems.

The principle: default serial, explicit parallel. If you want parallelism, you opt into it through additional lanes for background tasks. But the default is safe, predictable, and correct.
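Here is one way the "default serial, explicit parallel" idea could look, sketched in Python with `asyncio` rather than OpenClaw's actual Node.js code. The class name `LaneQueue`, the `lane` parameter, and the `"main"` default lane are assumptions for illustration. Only the `workspace:channel:userId` key format comes from the description above.

```python
import asyncio
from collections import defaultdict
from typing import Awaitable, Callable, DefaultDict, List, TypeVar

T = TypeVar("T")


class LaneQueue:
    """Run tasks one at a time per (session, lane); other lanes run concurrently."""

    def __init__(self) -> None:
        # One lock per lane. asyncio.Lock wakes waiters in FIFO order,
        # so tasks in the same lane run in the order they arrived.
        self._locks: DefaultDict[str, asyncio.Lock] = defaultdict(asyncio.Lock)

    @staticmethod
    def session_key(workspace: str, channel: str, user_id: str) -> str:
        return f"{workspace}:{channel}:{user_id}"

    async def run(
        self, key: str, task: Callable[[], Awaitable[T]], lane: str = "main"
    ) -> T:
        # Default lane is serial; passing another lane name opts into parallelism.
        async with self._locks[f"{key}#{lane}"]:
            return await task()


async def demo(second_lane: str) -> List[str]:
    queue = LaneQueue()
    key = LaneQueue.session_key("home", "chat", "42")
    events: List[str] = []

    async def job(name: str, delay: float) -> None:
        events.append(f"start {name}")
        await asyncio.sleep(delay)
        events.append(f"end {name}")

    await asyncio.gather(
        queue.run(key, lambda: job("a", 0.02)),
        queue.run(key, lambda: job("b", 0.0), lane=second_lane),
    )
    return events
```

With both jobs in the `"main"` lane, job `b` waits for job `a` to finish even though `a` sleeps longer. Putting `b` in a separate lane lets the two overlap.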

Skills as Markdown

Here’s where it gets wild. OpenClaw’s extensibility system uses YAML-frontmatter markdown files instead of code plugins. Skills are human-readable, hot-reloadable, and simple enough that agents can write and deploy their own skills mid-conversation.

This is a massive barrier reduction. You don’t need to be a developer to extend OpenClaw. You need to be able to write a markdown file.
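To give a feel for how little machinery this needs, here is a small Python sketch that splits a frontmatter-plus-markdown file into metadata and instructions. The field names (`name`, `description`) and the sample skill are invented for illustration, not OpenClaw's schema. The parser only handles flat `key: value` lines, so real YAML would need a proper YAML library.

```python
from typing import Dict, Tuple


def split_frontmatter(text: str) -> Tuple[Dict[str, str], str]:
    """Split a markdown document into (frontmatter fields, body)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    # Find the closing '---' delimiter; without one, treat the file as plain markdown.
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        return {}, text
    fields: Dict[str, str] = {}
    for line in lines[1:end]:
        if not line.strip() or line.lstrip().startswith("#"):
            continue  # skip blank lines and comments
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip().strip("\"'")
    body = "\n".join(lines[end + 1:]).strip()
    return fields, body


# A hypothetical skill file, written the way a non-developer might write it.
SKILL = """---
name: summarize-inbox
description: Summarize unread email into three bullet points
---
# Summarize inbox

When the user asks for an inbox summary, list unread messages and
reply with at most three bullets.
"""

fields, body = split_frontmatter(SKILL)
```

The metadata tells the runtime what the skill is for, and the markdown body is the instruction text handed to the model. That split is what makes skills easy for both people and agents to write.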

The Real Lesson: Code Is a Commodity Now

This is the part that matters most for anyone building software today.

When an AI agent can rewrite your entire backend while you’re getting coffee, the value of manually writing code drops to near zero. The new premium is on two things:

1. Taste

The intuitive ability to distinguish between something that merely works and something that’s elegant and maintainable. This isn’t something you can automate. It’s the accumulated judgment of years of building things, using things, and caring about the experience of the people who interact with your systems.

2. Architecture

The skill of designing systems that are resilient, extensible, and coherent. You’re no longer laying bricks. You’re the architect ensuring the building doesn’t lean, and the gardener ensuring the experience feels right.

The question for our generation of builders is no longer “How do I build this?” It’s: “What is actually worth building when the machine can build everything?”

What Steinberger Gets Right About Agent Development

His approach challenges several software engineering norms:

Upfront Planning Is Back

Contrary to the “move fast, iterate” agile culture, Steinberger’s workflow is closer to what you might call architect-first development. He spends significant time on high-level design, shaping the vision, defining the constraints, before letting agents execute. It’s almost a return to waterfall planning, but the execution phase happens at machine speed.

PRs Become “Prompt Requests”

He values seeing the prompts that generated the code more than the code itself. If you understand the intent and the instructions, you can always regenerate or refine the output. The prompt is the source of truth, not the code.

Under-Prompting on Purpose

Sometimes he gives deliberately vague prompts. Not because he’s lazy, but because letting the AI explore can surface solutions he wouldn’t have thought of. The constraint is that the feedback loop must be tight enough to catch bad outputs immediately.

Outcome-Oriented Engineers Thrive

People who love shipping products and care about outcomes excel in this world. People who love solving algorithmic puzzles for the joy of it? They struggle. The game has changed from “how clever is your implementation?” to “how well do you orchestrate systems that deliver value?”

The Security Reality Check

It’s not all roses. OpenClaw has also taught the community hard lessons about agent security:

- CVE-2026-25253: Missing WebSocket origin validation that enabled remote code execution

- ClawHub supply chain attacks: 12-20% of community-uploaded skills contained malicious prompt injections

- Plaintext credential storage: API keys stored unencrypted in early versions

“Local-first” doesn’t mean “security-optional.” As agents become more autonomous, the attack surface expands. Every skill file, every WebSocket connection, every stored credential becomes a potential vector. This is an area the entire agent ecosystem needs to mature in rapidly.
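For the WebSocket case, the general defense is to compare the handshake's `Origin` header against an explicit allow-list before accepting the connection. The Python sketch below shows that check in isolation. It is not the actual OpenClaw patch. The function name `is_allowed_origin`, the example origins, and the choice to reject a missing `Origin` are assumptions made for this example.

```python
from typing import Iterable, Optional, Tuple
from urllib.parse import urlsplit


def _normalize(origin: str) -> Optional[Tuple[str, str, int]]:
    """Reduce an origin to (scheme, host, port), or None if it is malformed."""
    try:
        parts = urlsplit(origin)
        port = parts.port  # raises ValueError on a non-numeric port
    except ValueError:
        return None
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return None
    if port is None:
        port = 443 if parts.scheme == "https" else 80
    return (parts.scheme, parts.hostname, port)


def is_allowed_origin(origin: Optional[str], allowed: Iterable[str]) -> bool:
    """Accept only an exact scheme/host/port match against the allow-list."""
    if not origin:
        # Policy choice in this sketch: no Origin header means no connection.
        # Non-browser clients would then need a separate authenticated path.
        return False
    candidate = _normalize(origin)
    if candidate is None:
        return False
    return candidate in {_normalize(item) for item in allowed}


# Hypothetical allow-list for a locally served web UI.
ALLOWED = ["http://localhost:3000"]
```

Exact matching matters here: prefix or substring checks are the classic way this kind of validation gets bypassed. An origin check also complements authentication on the socket rather than replacing it.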

Open Source as the Ultimate Moat

Perhaps the most counterintuitive lesson: open source builds a stronger moat than proprietary code when the value shifts from code to “soul.”

When code itself is a commodity that agents can regenerate at will, protecting it behind restrictive licenses becomes less meaningful. What matters is the vision, the taste, the architectural coherence, the community. These are things that can’t be copied by cloning a repo.

OpenClaw proved this. No marketing budget. No sales team. Just one person with extraordinary architectural vision, a commitment to local-first privacy, and the transparency of open source. The community showed up because the soul of the project resonated.

What This Means for You

Whether you’re a solo developer, a platform engineer, or leading an engineering org, OpenClaw’s rise carries concrete lessons:

- Invest in architectural thinking. The returns on system design skills are skyrocketing while the returns on manual coding are plummeting.

- Learn to orchestrate agents, not just use them. Running multiple agents in parallel with tight feedback loops is a skill. Practice it.

- Close the loop. Any agent workflow that can’t self-verify is going to produce garbage at scale. Build in compilation, linting, and testing as non-negotiable steps.

- Rethink retention. If you’re building tools, consider whether your users would be better served by getting in and out fast rather than being trapped in your interface.

- Take security seriously from day one. Agent extensibility systems are attack surfaces. Treat community-contributed skills like untrusted code, because that’s exactly what they are.

- Develop taste. Read great code. Use great products. Understand why something feels right. This is the skill that separates the human architect from the machine executor.

The era of the high-agency builder is here. One person, armed with the right architectural vision and the ability to orchestrate AI agents, can build what used to require an entire team. The barrier isn’t technical skill anymore. It’s judgment, taste, and the willingness to re-examine your assumptions weekly.

Peter Steinberger didn’t just build an open-source project. He built a proof of concept for the future of our industry.

The question is: what will you build with it?

Inspired by Nati Shalom’s analysis of the OpenClaw phenomenon and The Pragmatic Engineer’s deep dive into Peter Steinberger’s workflow. The views and synthesis in this post are my own.
